Sample Efficient Adaptive Text-to-Speech

Yutian Chen, Yannis Assael, Brendan Shillingford, David Budden, Scott Reed, Heiga Zen, Quan Wang, Luis C. Cobo, Andrew Trask, Ben Laurie, Caglar Gulcehre, Aäron van den Oord, Oriol Vinyals, Nando de Freitas

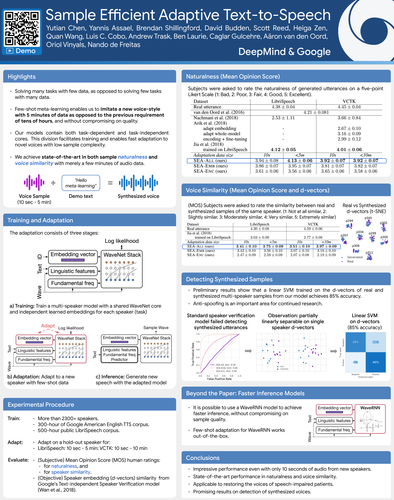

We present a meta-learning approach for adaptive text-to-speech (TTS) with few data. During training, we learn a multi-speaker model using a shared conditional WaveNet core and independent learned embeddings for each speaker. The aim of training is not to produce a neural network with fixed weights, which is then deployed as a TTS system. Instead, the aim is to produce a network that requires few data at deployment time to rapidly adapt to new speakers. We introduce and benchmark three strategies: (i) learning the speaker embedding while keeping the WaveNet core fixed, (ii) fine-tuning the entire architecture with stochastic gradient descent, and (iii) predicting the speaker embedding with a trained neural network encoder. The experiments show that these approaches are successful at adapting the multi-speaker neural network to new speakers, obtaining state-of-the-art results in both sample naturalness and voice similarity with merely a few minutes of audio data from new speakers.

Example imitated utterances for each approach

Description

Each row shows a demonstration waveform followed by three imitation approaches

given the same text.

Demonstration waveforms are 5 minutes long (for VCTK)

or 10 minutes long (for Librispeech).

Sentence spoken

Here are some pages for who sells toms shoes.

| Real demonstration utterance | Adapt speaker embedding and finetune network | Adapt speaker embedding (only) | Encoder network |

|---|---|---|---|

| Librispeech 2300 | |||

| Librispeech 3575 | |||

| Librispeech 7729 | |||

| VCTK Speaker p301 | |||

| VCTK Speaker p318 | |||

| VCTK Speaker p360 |

Description

Each row shows a demonstration waveform followed by three imitation approaches

given the same text.

Demonstration waveforms are 5 minutes long (for VCTK)

or 10 minutes long (for Librispeech).

Sentence spoken

Modern birds are classified as coelurosaurs by nearly all palaeontologists..

| Real demonstration utterance | Adapt speaker embedding and finetune network | Adapt speaker embedding (only) | Encoder network |

|---|---|---|---|

| Librispeech 2300 | |||

| Librispeech 3575 | |||

| Librispeech 7729 | |||

| VCTK Speaker p301 | |||

| VCTK Speaker p318 | |||

| VCTK Speaker p360 |

Description

Each row shows a demonstration waveform followed by three imitation approaches

given the same text.

Demonstration waveforms are 5 minutes long (for VCTK)

or 10 minutes long (for Librispeech).

Sentence spoken

There were many editions of these works still being used in the 19th century..

| Real demonstration utterance | Adapt speaker embedding and finetune network | Adapt speaker embedding (only) | Encoder network |

|---|---|---|---|

| Librispeech 2300 | |||

| Librispeech 3575 | |||

| Librispeech 7729 | |||

| VCTK Speaker p301 | |||

| VCTK Speaker p318 | |||

| VCTK Speaker p360 |

Description

Each row shows a demonstration waveform followed by three imitation approaches

given the same text.

Demonstration waveforms are 5 minutes long (for VCTK)

or 10 minutes long (for Librispeech).

Sentence spoken

The town is further intersected by numerous small canals with tree-bordered quays..

| Real demonstration utterance | Adapt speaker embedding and finetune network | Adapt speaker embedding (only) | Encoder network |

|---|---|---|---|

| Librispeech 2300 | |||

| Librispeech 3575 | |||

| Librispeech 7729 | |||

| VCTK Speaker p301 | |||

| VCTK Speaker p318 | |||

| VCTK Speaker p360 |

Effect of demo waveform length on quality

Description

Comparison of different lengths of demonstration utterances.

Uses the first approach above: both adapt the speaker embedding and finetune the network.

Sentence spoken

Here are some pages for who sells Toms shoes.

| Real demonstration utterance | Using 10 seconds of demo waveform | Using 1 minute of demo waveform | Using 5 minutes (LibriSpeech) / 10 minutes (VCTK) of demo waveform |

|---|---|---|---|

| Librispeech 2300 | |||

| Librispeech 3575 | |||

| Librispeech 7729 | |||

| VCTK Speaker p301 | |||

| VCTK Speaker p318 | |||

| VCTK Speaker p360 |